Queues in Python

How to implement a FIFO queue data structure in Python using only built-in data types and classes from the standard library.

A queue is a collection of objects that supports fast first-in, first-out (FIFO) semantics for inserts and deletes. The insert and delete operations are sometimes called enqueue and dequeue. Unlike lists or arrays, queues typically don’t allow for random access to the objects they contain.

Here’s a real-world analogy for a first-in, first-out queue:

Imagine a line of Pythonistas waiting to pick up their conference badges on day one of PyCon registration. New people join at the back of the queue as they enter the conference venue and “queue up” to receive their badges. Removal (serving) happens at the front of the queue, as developers receive their badges and conference swag bags and leave the queue.

Another way to memorize the characteristics of a queue data structure is to think of it as a pipe:

New items (water molecules, ping-pong balls, …) are put in at one end and travel to the other, where you or someone else removes them again. While the items are inside the queue (a solid metal pipe), you can’t get at them. The only way to interact with the items in the queue is to add new items at the back (enqueue) or to remove items at the front (dequeue) of the pipe.

Queues are similar to stacks; the difference between them lies in how items are removed:

With a queue, you remove the item least recently added (first-in, first-out, or FIFO); with a stack, you remove the item most recently added (last-in, first-out, or LIFO).

Performance-wise, a proper queue implementation is expected to take O(1) time for insert and delete operations. These are the two main operations performed on a queue and they should be fast in a correct implementation.

Queues have a wide range of applications in algorithms, and they help solve scheduling and parallel programming problems. A short and beautiful algorithm that uses a queue is breadth-first search (BFS) on a tree or graph data structure.
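To make the BFS remark concrete, here is a minimal sketch. The function name `bfs_order` and the example graph are made up for illustration, and the queue itself is a collections.deque, which is covered later in this article.

```python
from collections import deque


def bfs_order(graph, start):
    """Return the nodes reachable from `start` in breadth-first order."""
    visited = {start}
    order = []
    queue = deque([start])  # FIFO queue of nodes waiting to be explored

    while queue:
        node = queue.popleft()  # dequeue the node that has waited longest
        order.append(node)
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(neighbor)  # enqueue at the back
    return order


# Hypothetical example graph, stored as an adjacency list.
graph = {
    "A": ["B", "C"],
    "B": ["D"],
    "C": ["D", "E"],
    "D": ["F"],
    "E": ["F"],
    "F": [],
}

print(bfs_order(graph, "A"))  # ['A', 'B', 'C', 'D', 'E', 'F']
```

Because nodes leave the queue in the order they entered it, every node at distance 1 from the start is visited before any node at distance 2, and so on.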

Scheduling algorithms often use priority queues internally. These are specialized queues: instead of retrieving the next element by insertion time, a priority queue retrieves the highest-priority element. The priority of individual elements is decided by the queue based on the ordering applied to their keys.
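As a quick contrast to a plain FIFO queue, the following sketch shows priority-based retrieval using the standard library's heapq module. The task names and priority numbers are invented for this example, and it assumes that a lower number means a higher priority.

```python
import heapq

# Hypothetical tasks as (priority, description) pairs; in this sketch
# a lower number means a higher priority.
tasks = []
heapq.heappush(tasks, (2, "code"))
heapq.heappush(tasks, (1, "eat"))
heapq.heappush(tasks, (3, "sleep"))

served = []
while tasks:
    priority, description = heapq.heappop(tasks)  # smallest key comes out first
    served.append(description)

print(served)  # ['eat', 'code', 'sleep']
```

Note that the items come out ordered by their keys, not by the order in which they were inserted.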

A regular queue, however, won’t re-order the items it carries. You get what you put in, and in exactly that order (remember the pipe example?).

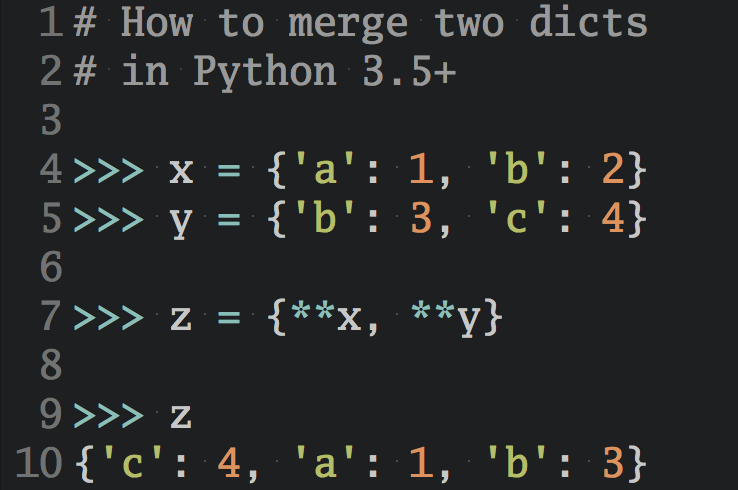

Python ships with several queue implementations that each have slightly different characteristics. Let’s take a look at them:

⛔ The list Built-in

It’s possible to use a regular list as a queue, but this is not ideal from a performance perspective. Appending to the end of a list is fast, but lists are quite slow at the other end: inserting or deleting an element at the beginning requires shifting all of the other elements by one, which takes O(n) time.

Therefore, I would not recommend using a list as a makeshift queue in Python (unless you’re dealing with only a small number of elements).

    # How to use Python's list as a FIFO queue:
    >>> q = []
    >>> q.append('eat')
    >>> q.append('sleep')
    >>> q.append('code')
    >>> q
    ['eat', 'sleep', 'code']
    # Careful: This is slow!
    >>> q.pop(0)
    'eat'
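If you want to see the cost of `pop(0)` for yourself, a rough timing sketch like the one below can help. The helper names and the size `n` are arbitrary choices for illustration, the comparison uses collections.deque (introduced in the next section), and the actual numbers will vary from machine to machine.

```python
from collections import deque
from timeit import timeit


def drain_list(n):
    """Dequeue n items from the front of a list (each pop(0) is O(n))."""
    q = list(range(n))
    while q:
        q.pop(0)


def drain_deque(n):
    """Dequeue n items from the front of a deque (each popleft() is O(1))."""
    q = deque(range(n))
    while q:
        q.popleft()


n = 20_000  # arbitrary size chosen for illustration
list_seconds = timeit(lambda: drain_list(n), number=5)
deque_seconds = timeit(lambda: drain_deque(n), number=5)

print(f"list.pop(0):     {list_seconds:.4f} s")
print(f"deque.popleft(): {deque_seconds:.4f} s")
```

The gap between the two timings should widen as `n` grows, since draining the list this way takes quadratic time overall.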

✅ The collections.deque Class

The deque class implements a double-ended queue that supports adding and removing elements from either end in O(1) time.

Python’s deque objects are implemented as doubly-linked lists, which gives them excellent performance for enqueuing and dequeuing elements but poor O(n) performance for randomly accessing elements in the middle of the queue.

Because deques support adding and removing elements from either end equally well, they can serve both as queues and as stacks.

collections.deque is a great default choice if you’re looking for a queue data structure in Python’s standard library.

    # How to use collections.deque as a FIFO queue:
    >>> from collections import deque
    >>> q = deque()
    >>> q.append('eat')
    >>> q.append('sleep')
    >>> q.append('code')
    >>> q
    deque(['eat', 'sleep', 'code'])
    >>> q.popleft()
    'eat'
    >>> q.popleft()
    'sleep'
    >>> q.popleft()
    'code'
    >>> q.popleft()
    IndexError: pop from an empty deque
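Since a deque works equally well from both ends, the same object type can act as a queue or as a stack. Here is a small sketch of that idea; the variable names are only for illustration.

```python
from collections import deque

items = ["eat", "sleep", "code"]

# FIFO: items enter at the right end and leave from the left end.
fifo = deque(items)
fifo_order = [fifo.popleft() for _ in range(len(items))]

# LIFO: items enter at the right end and leave from that same end.
lifo = deque(items)
lifo_order = [lifo.pop() for _ in range(len(items))]

print(fifo_order)  # ['eat', 'sleep', 'code']
print(lifo_order)  # ['code', 'sleep', 'eat']
```

The only difference between the two behaviors is which end you remove from: `popleft()` gives you a queue, `pop()` gives you a stack.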

✅ The queue.Queue Class

This queue implementation in the Python standard library is synchronized and provides locking semantics to support multiple concurrent producers and consumers.

The queue module contains several other classes implementing multi-producer, multi-consumer queues that are useful for parallel computing.

Depending on your use case, the locking semantics might be helpful or might just incur unneeded overhead. In the latter case, you’d be better off using collections.deque as a general-purpose queue.

    # How to use queue.Queue as a FIFO queue:
    >>> from queue import Queue
    >>> q = Queue()
    >>> q.put('eat')
    >>> q.put('sleep')
    >>> q.put('code')
    >>> q
    <queue.Queue object at 0x1070f5b38>
    >>> q.get()
    'eat'
    >>> q.get()
    'sleep'
    >>> q.get()
    'code'
    >>> q.get_nowait()
    queue.Empty
    >>> q.get()  # Blocks / waits forever...
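The locking semantics pay off once more than one thread touches the queue. The following is a minimal producer/consumer sketch, not a full pattern: the `producer` and `consumer` functions and the `STOP` sentinel are assumptions made for this example.

```python
import threading
from queue import Queue

STOP = None  # sentinel telling the consumer to finish (an assumption of this sketch)


def producer(q, items):
    """Put every item on the queue, then signal that no more will follow."""
    for item in items:
        q.put(item)
    q.put(STOP)


def consumer(q, results):
    """Take items off the queue until the sentinel arrives."""
    while True:
        item = q.get()  # blocks until an item is available
        if item is STOP:
            break
        results.append(item.upper())


q = Queue()
results = []
threads = [
    threading.Thread(target=producer, args=(q, ["eat", "sleep", "code"])),
    threading.Thread(target=consumer, args=(q, results)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(results)  # ['EAT', 'SLEEP', 'CODE']
```

With a single producer and a single consumer, the FIFO order is preserved. The blocking `get()` means the consumer simply waits whenever the producer hasn’t caught up yet.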

✅ The multiprocessing.Queue Class

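This is a shared job queue implementation that allows queued items to be processed in parallel by multiple concurrent workers. Process-based parallelization is popular in Python because, in CPython, the global interpreter lock (GIL) keeps more than one thread at a time from executing Python bytecode within a single interpreter process.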

multiprocessing.Queue is meant for sharing data between processes and can store any pickle-able object.

    # How to use multiprocessing.Queue as a FIFO queue:
    >>> from multiprocessing import Queue
    >>> q = Queue()
    >>> q.put('eat')
    >>> q.put('sleep')
    >>> q.put('code')
    >>> q
    <multiprocessing.queues.Queue object at 0x1081c12b0>
    >>> q.get()
    'eat'
    >>> q.get()
    'sleep'
    >>> q.get()
    'code'
    >>> q.get()  # Blocks / waits forever...
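To show the queue actually crossing process boundaries, here is a rough sketch with two worker processes. The names `square_worker` and `run_squares`, the `None` sentinel, and the worker count are all assumptions made for this example. The `if __name__ == "__main__":` guard matters on platforms where worker processes re-import the main module.

```python
from multiprocessing import Process, Queue


def square_worker(tasks, results):
    """Take numbers from `tasks` until a None sentinel arrives."""
    for number in iter(tasks.get, None):
        results.put(number * number)


def run_squares(numbers, worker_count=2):
    """Square each number in a pool of worker processes."""
    tasks, results = Queue(), Queue()
    workers = [
        Process(target=square_worker, args=(tasks, results))
        for _ in range(worker_count)
    ]
    for worker in workers:
        worker.start()

    for number in numbers:
        tasks.put(number)
    for _ in workers:
        tasks.put(None)  # one sentinel per worker

    # Collect results before joining so no worker blocks on a full pipe.
    squares = [results.get() for _ in numbers]
    for worker in workers:
        worker.join()
    return sorted(squares)  # arrival order depends on scheduling


if __name__ == "__main__":
    print(run_squares([1, 2, 3, 4]))  # [1, 4, 9, 16]
```

The results are sorted before they’re returned because, with several workers pulling from the same queue, the order in which results arrive isn’t guaranteed.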

A good default choice: collections.deque

If you’re not looking for parallel processing support, the implementation offered by collections.deque is an excellent default choice for implementing a FIFO queue data structure in Python.

It provides the performance characteristics you’d expect from a good queue implementation and can also be used as a stack (LIFO queue).

This article is part of the “Fundamental Data Structures in Python” article series.